Case Study: How Cinder Helped Black Forest Labs Launch FLUX.2 Without Compromising on Safety

Black Forest Labs was preparing to launch FLUX.2, its latest family of generative AI models, to millions of developers. The company is committed to preventing adversarial misuse, including attempts to generate child sexual abuse material and non-consensual intimate imagery.

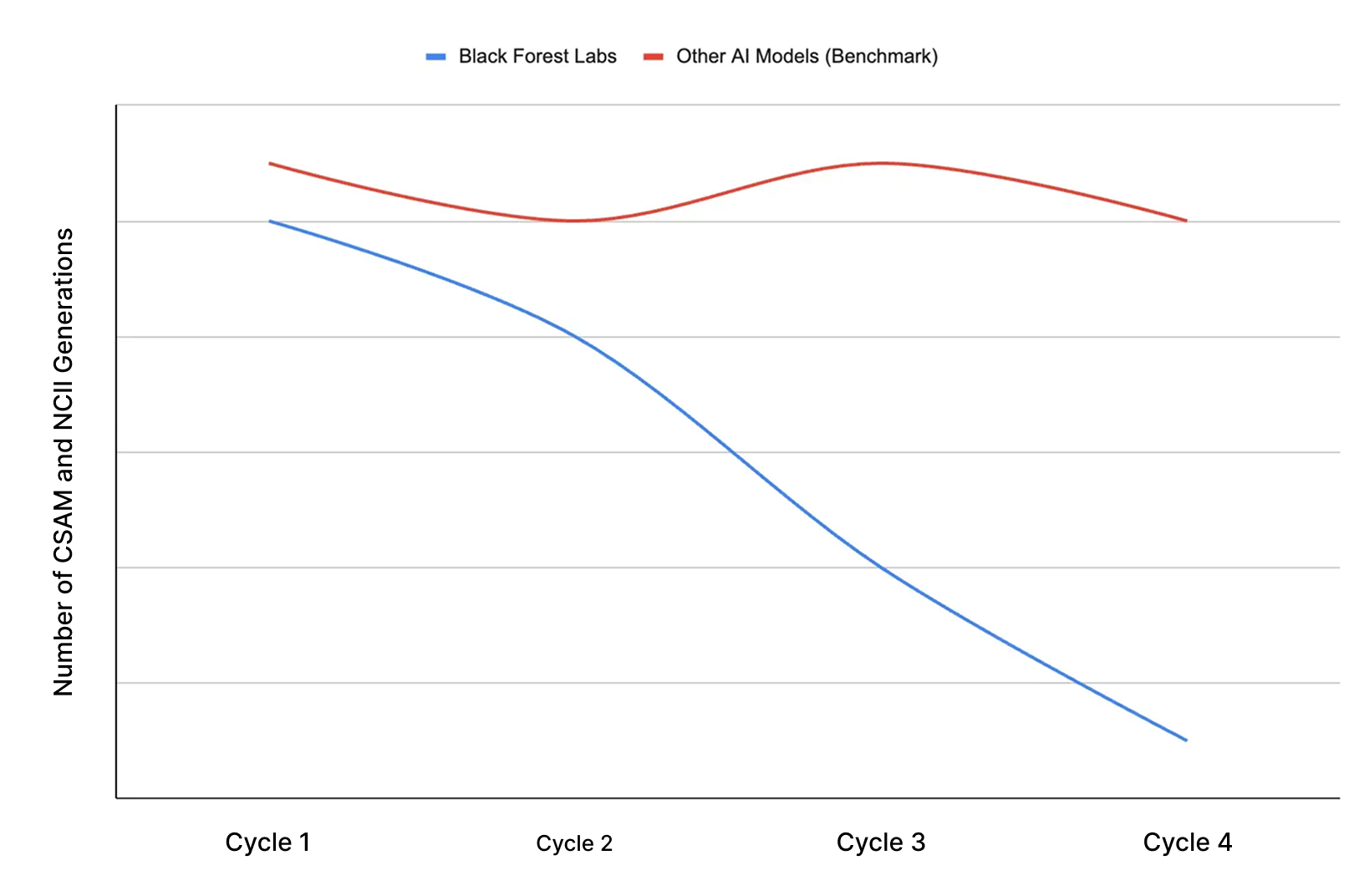

Cinder red-teamed the model across four iterative cycles, observing a more than 90% reduction in harmful outputs and performance 10x safer than industry peers. Evaluation turnaround was under 48 hours.

Results

- >90% reduction in CSAM and NCII vulnerability across text-to-image and image-to-image attack vectors

- 10x safer than benchmarked industry models at launch

- Sub-48-hour evaluation turnaround

The outcome: Black Forest Labs launched one of the most significant open-weight and closed-source model releases in generative visual AI, confident that its safety posture could withstand real-world adversarial misuse.

"Cinder provided rigorous adversarial testing that matched our release velocity. Their team found important edge cases and helped us address them before launch."

–Ben Brooks, Head of Public Policy, Black Forest Labs

Why Black Forest Labs Chose Cinder

Speed at enterprise quality. Cinder delivered comprehensive adversarial evaluations in under 48 hours, not weeks, without sacrificing rigor or attack sophistication.

Adversarial depth. Cinder's red team deployed advanced attack techniques across linguistic evasion, semantic manipulation, and multi-modal exploits that automated tools and ad hoc red teams routinely miss.

Quantifiable benchmarking. Applying identical prompt sets to Black Forest Labs' models and to competitor models provided objective evidence of improvement rather than anecdotal claims.

Pre-launch, not post-mortem. Issues were identified and resolved before public release, not after a crisis.

The Approach

Cinder evaluated both text-to-image and image-to-image generation across the two highest-severity harm categories for generative AI.

CSAM (Child sexual abuse material)

NCII (Non-consensual intimate imagery of identifiable individuals)

Four iterative evaluation cycles, each targeting a different level of attack sophistication. Every unsafe output categorized. Every successful defense analyzed. Vulnerability patterns shared with Black Forest Labs' research team.

No performance degradation. No creative quality sacrificed.

What This Unlocked

Black Forest Labs shipped FLUX.2 knowing their safety posture was validated against real-world threats, not just internal testing.

Black Forest Labs developed a robust evidence base to support a public launch.

Their customers had the confidence to deploy at scale.

The ecosystem gained evidence that open-weight models can be both safe and performant.

Cinder and Black Forest Labs continue expanding their partnership to new modalities and emerging attack vectors as generative AI evolves.